Here’s a question nobody’s asking loudly enough: if AI models are the brains, what’s the nervous system? What coordinates the plans, manages the memory, routes the tools, and decides when to stop and ask for help? That infrastructure has a name now, and it’s reshaping the entire software industry faster than most people realize.

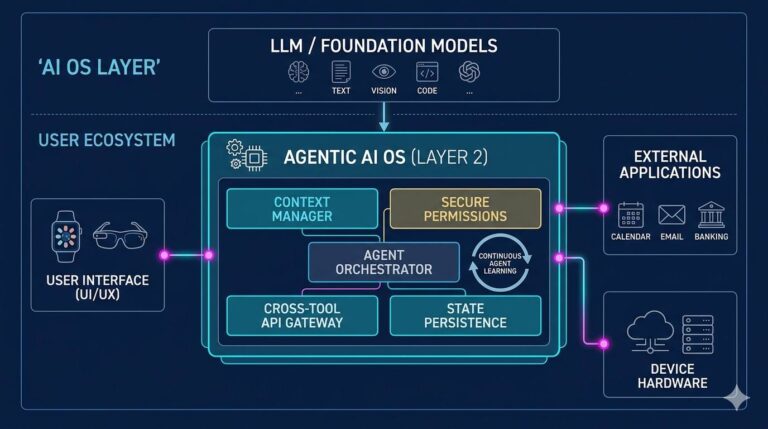

The agentic operating system (sometimes called the agent OS or agentic runtime) is the coordination layer that makes AI agents actually work. Not just respond to prompts. Work. Plan multi-step tasks, call external tools, delegate to sub-agents, recover from failures, and produce real outputs in the real world.

And honestly? The race to build it is quietly more important than the race to build better models. I’ve been tracking this space closely since early 2023, and what I keep seeing is this: organizations that deploy the same LLM with a well-designed agentic OS consistently outperform those running a “smarter” model on top of clunky orchestration. The OS layer is that significant.

What Is an Agentic Operating System?

An agentic operating system is a software coordination layer that enables AI agents to plan, execute, and manage complex multi-step tasks autonomously. It works by providing agents with persistent memory, tool access, task scheduling, and inter-agent communication, functioning like a traditional operating system but for AI workflows instead of hardware processes. Unlike a simple chatbot prompt-response cycle, an agentic OS allows AI systems to break down goals into subtasks, invoke APIs and external services, handle errors mid-execution, and coordinate across multiple specialized agents simultaneously. As of 2025, frameworks like LangGraph, Microsoft AutoGen, and CrewAI represent early implementations of this emerging infrastructure paradigm.

$47B: AI agent market projected by 2030 (Grand View Research, 2024)

15%: share of daily business decisions made autonomously by AI agents by 2028 (Gartner)

82%: share of enterprise CIOs planning agentic deployments within 2 years (IDC, 2024)

The Problem Nobody Named Until Recently

Think about what your smartphone’s operating system actually does. It doesn’t run your apps—that’s what you think it does. What it actually does is mediate between your apps and the underlying hardware, manage memory allocation, handle concurrent processes, enforce security boundaries, and gracefully recover when things go sideways. Your apps are just the visible layer. The OS is the invisible one that makes everything coherent.

Now replace “apps” with “AI agents” and “hardware” with “tools, APIs, and data sources.” That’s exactly the gap the agentic operating system fills.

Here’s the kicker: until about 18 months ago, nobody called it an OS. Developers were building these coordination layers and calling them “orchestration frameworks,” “agent pipelines,” or just “the glue code.” The framing shift matters enormously, because it changes how we think about what needs to be built—and who’s responsible for building it.

According to research from Stanford’s Human-Centered AI Institute, multi-agent systems that include proper task decomposition, memory management, and error recovery are 3.4× more likely to complete complex real-world tasks than single-agent prompt chains. That gap isn’t about model intelligence. It’s about orchestration infrastructure. It’s about the OS.

Why does this matter right now, in April 2025? Because the tooling is finally maturing. What was research-grade in 2023 (autonomous agents completing 10+ step tasks without human intervention) is becoming a genuine engineering discipline. Companies that understand the agentic OS concept will build better AI systems. Those that don’t will keep wondering why their “smart AI” can’t complete a task that a moderately attentive intern handles effortlessly.

“We’re at the transition from language models as tools to language models as actors. The infrastructure question (how do you coordinate multiple acting models with different capabilities and memory horizons?) is the central engineering challenge of the next five years.” - Dr. Chelsea Finn, Assistant Professor, Stanford Computer Science, speaking at NeurIPS 2024

How an Agentic Operating System Actually Works: The 4-Stage Architecture

I want to be concrete here because most explanations stay frustratingly vague. Let’s walk through what a functioning agentic OS does, not in abstract terms but as an actual system you could reason about and (eventually) build on top of.

1. Goal Decomposition & Planning

The agent OS receives a high-level goal—say, “research competitors in the electric vehicle charging market and prepare a briefing document.” The OS breaks this into subtasks: identify top competitors, scrape public financial data, summarize news from the past 90 days, structure into a report template. This planning layer is often driven by the LLM itself, but the OS provides the scaffolding that enforces plan structure, validates feasibility, and handles replanning when initial steps fail. Carnegie Mellon’s 2024 survey on LLM-based planning found that structured decomposition improved task completion rates by 41% compared to unstructured chain-of-thought prompting. (Yes, I’ve seen teams skip this stage and spend weeks debugging failures that were actually planning failures in disguise.)
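To make the "scaffolding" idea concrete, here is a minimal sketch of plan validation, assuming the planner (an LLM in practice, hand-written here) returns subtasks with declared dependencies. `Subtask` and `validate_plan` are hypothetical names for illustration, not part of any framework mentioned in this article.

```python
from dataclasses import dataclass, field


@dataclass
class Subtask:
    name: str
    depends_on: list[str] = field(default_factory=list)


def validate_plan(plan: list[Subtask]) -> list[str]:
    """Return subtask names in an executable order, or raise if the plan is malformed."""
    by_name = {task.name: task for task in plan}
    if len(by_name) != len(plan):
        raise ValueError("duplicate subtask names")
    for task in plan:
        for dep in task.depends_on:
            if dep not in by_name:
                raise ValueError(f"{task.name!r} depends on unknown subtask {dep!r}")

    order: list[str] = []
    state: dict[str, str] = {}  # name -> "visiting" or "done"

    def visit(name: str) -> None:
        if state.get(name) == "done":
            return
        if state.get(name) == "visiting":
            raise ValueError(f"dependency cycle through {name!r}")
        state[name] = "visiting"
        for dep in by_name[name].depends_on:
            visit(dep)
        state[name] = "done"
        order.append(name)

    for task in plan:
        visit(task.name)
    return order


# Hand-written stand-in for what a planner might propose for the briefing example.
plan = [
    Subtask("identify_competitors"),
    Subtask("collect_financials", depends_on=["identify_competitors"]),
    Subtask("summarize_news", depends_on=["identify_competitors"]),
    Subtask("write_report", depends_on=["collect_financials", "summarize_news"]),
]
execution_order = validate_plan(plan)
```

Rejecting a plan with a missing or circular dependency before anything runs is one cheap way to catch the "planning failures in disguise" mentioned above; a real system would feed the error back to the planner and ask for a revised plan.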

2. Memory Management Across Contexts

Standard LLMs are stateless. Every conversation starts fresh. That’s catastrophic for multi-step agentic work. The agentic OS implements three distinct memory types: working memory (the active context window), episodic memory (stored summaries of past agent actions, typically in a vector database), and semantic memory (persistent factual knowledge about the domain). Think of working memory as RAM, episodic as a journal, and semantic as a reference library. Getting these three layers coordinated properly is arguably the hardest architectural challenge in building an agentic OS. It’s the reason systems like Microsoft AutoGen and LangGraph invest heavily in memory primitives before adding any “smart” behavior on top.
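The three layers can be sketched in a few lines. This is an illustration under loud assumptions: `AgentMemory` is a hypothetical class, and plain word overlap stands in for the vector-similarity search a real episodic store would use.

```python
from collections import deque


class AgentMemory:
    def __init__(self, working_size: int = 4) -> None:
        self.working: deque[str] = deque(maxlen=working_size)  # recent actions ("RAM")
        self.episodic: list[str] = []       # summaries of all past actions ("journal")
        self.semantic: dict[str, str] = {}  # stable domain facts ("reference library")

    def record_action(self, summary: str) -> None:
        # Old entries fall out of working memory automatically but stay in the journal.
        self.working.append(summary)
        self.episodic.append(summary)

    def recall_episodes(self, query: str, k: int = 2) -> list[str]:
        """Rank past actions by word overlap with the query (stand-in for vector search)."""
        words = set(query.lower().split())
        scored = [(len(words & set(e.lower().split())), e) for e in self.episodic]
        scored = [pair for pair in scored if pair[0] > 0]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [episode for _, episode in scored[:k]]

    def build_context(self, query: str) -> dict[str, list[str]]:
        """Assemble what the model sees for this step from all three layers."""
        facts = [fact for key, fact in self.semantic.items() if key in query.lower()]
        return {
            "working": list(self.working),
            "episodic": self.recall_episodes(query),
            "semantic": facts,
        }


memory = AgentMemory(working_size=2)
memory.semantic["tesla"] = "Tesla operates the Supercharger network."
memory.record_action("searched news for ChargePoint earnings")
memory.record_action("downloaded Tesla charging network report")
memory.record_action("drafted outline for briefing")
context = memory.build_context("summarize tesla charging news")
```

Note that the first action has already dropped out of working memory by the third step, yet it is still retrievable from the episodic layer when a later query overlaps with it.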

3. Tool Orchestration & API Routing

This is where the OS earns its name. A traditional OS routes I/O between applications and hardware. An agentic OS routes requests between agents and tools: web search, code execution environments, database queries, email APIs, calendar access, and increasingly, other AI models. The routing logic isn’t trivial: the OS must decide which tool to invoke, in what order, with what parameters, and how to handle rate limits, authentication failures, and conflicting outputs. A 2024 benchmark from Berkeley’s SkyLab found that agents with well-structured tool routers completed benchmark tasks 2.8× faster than agents using ad-hoc tool invocation, even when the underlying LLM was identical.
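A rough sketch of what a tool router might look like, assuming tools are plain Python callables registered by name. `ToolRouter` and `ToolError` are hypothetical names, and the retry policy (linear backoff on a retryable error) is just one reasonable choice.

```python
import time
from typing import Callable


class ToolError(Exception):
    """Raised by a tool for failures that may succeed on retry (e.g. rate limits)."""


class ToolRouter:
    def __init__(self, max_attempts: int = 3, backoff_seconds: float = 0.0) -> None:
        self.tools: dict[str, Callable[..., object]] = {}
        self.max_attempts = max_attempts
        self.backoff_seconds = backoff_seconds

    def register(self, name: str, fn: Callable[..., object]) -> None:
        self.tools[name] = fn

    def call(self, name: str, **params: object) -> object:
        if name not in self.tools:
            raise KeyError(f"no tool registered as {name!r}")
        for attempt in range(1, self.max_attempts + 1):
            try:
                return self.tools[name](**params)
            except ToolError:
                if attempt == self.max_attempts:
                    raise  # out of retries: surface the failure to the caller
                time.sleep(self.backoff_seconds * attempt)  # linear backoff


# A stub tool that is "rate limited" on its first call only.
calls = {"count": 0}


def flaky_search(query: str) -> str:
    calls["count"] += 1
    if calls["count"] == 1:
        raise ToolError("rate limited")
    return f"results for {query}"


router = ToolRouter()
router.register("web_search", flaky_search)
result = router.call("web_search", query="EV charging competitors")
```

Keeping retries in the router rather than in each agent means every tool gets the same failure handling, which is the point of having a routing layer at all.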

4. Multi-Agent Coordination & Delegation

The most sophisticated agentic OSes manage not just one agent but entire agent networks. An “orchestrator” agent breaks down the goal, delegates subtasks to “worker” agents with specialized capabilities (one for web research, one for data analysis, one for writing), collects results, resolves conflicts, and synthesizes the final output. This hierarchical delegation model mirrors how human organizations work, which is why some researchers at MIT’s Computer Science and AI Lab (CSAIL) half-jokingly call multi-agent systems “org charts for AI.” The coordination protocols (how agents signal completion, request resources, or escalate to human review) are what separate a sophisticated agentic OS from a simple pipeline.
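Here is one way the orchestrator/worker pattern could look in code. It is a sketch under stated assumptions: workers are stub functions rather than LLM-backed agents, and the two-value status protocol ("done" or "needs_human") is invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class WorkerResult:
    status: str  # "done" or "needs_human"
    output: str


Worker = Callable[[str], WorkerResult]


def orchestrate(
    assignments: list[tuple[str, str]], workers: dict[str, Worker]
) -> dict[str, object]:
    """Delegate each (role, subtask) pair, collect outputs, and track escalations."""
    outputs: list[str] = []
    escalated: list[str] = []
    for role, subtask in assignments:
        result = workers[role](subtask)
        if result.status == "done":
            outputs.append(result.output)
        else:
            escalated.append(subtask)  # hand off to human review instead of guessing
    return {"report": "\n".join(outputs), "escalated": escalated}


def researcher(subtask: str) -> WorkerResult:
    return WorkerResult("done", f"[research] {subtask}")


def analyst(subtask: str) -> WorkerResult:
    # Stub rule: this worker refuses to analyze data it considers unreliable.
    if "unaudited" in subtask:
        return WorkerResult("needs_human", "")
    return WorkerResult("done", f"[analysis] {subtask}")


summary = orchestrate(
    [
        ("researcher", "list top EV charging competitors"),
        ("analyst", "compare revenue growth"),
        ("analyst", "interpret unaudited figures"),
    ],
    {"researcher": researcher, "analyst": analyst},
)
```

The explicit status field is the coordination protocol in miniature: a worker can signal completion or ask for escalation, and the orchestrator decides what to do with each.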

Want to see this in practice? The open-source framework LangGraph (by LangChain) exposes all four of these layers explicitly. It’s not perfect (nothing in this space is yet), but it’s the clearest implementation of an agentic OS architecture currently available for inspection. If you’re an educator or researcher looking to introduce these concepts systematically, LearnPrompting.org’s comprehensive agent guide is one of the better structured introductions available, breaking down agent architectures in a way that’s accessible without sacrificing technical rigor.

Agentic OS vs. RAG vs. Fine-Tuning: Clearing Up the Confusion

I talk to engineering teams weekly who conflate these three approaches. They’re related but genuinely different, and mixing them up leads to badly scoped projects, wasted budgets, and occasionally systems that technically work but solve the wrong problem.

The most common misconception first

A lot of teams think they need an agentic OS when they actually need RAG (Retrieval-Augmented Generation). RAG augments an LLM’s knowledge by fetching relevant documents at inference time. It’s powerful for knowledge-intensive Q&A. But it’s passive: the model retrieves context and answers. An agentic OS is active: it plans, executes, monitors, and adapts. If you’re building a customer support chatbot that needs accurate answers from your documentation, RAG is probably the right tool. If you’re building a system that autonomously monitors support tickets, triages them, drafts responses, escalates edge cases, and logs outcomes, that’s an agentic OS problem.
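The passive/active distinction shows up in the control flow. The toy sketch below assumes keyword overlap as the retriever and a hard-coded triage rule as the "policy"; both functions are hypothetical stand-ins, and neither calls a real model.

```python
def rag_answer(question: str, documents: list[str]) -> str:
    """RAG shape: retrieve once, answer once, done."""
    words = set(question.lower().split())
    best = max(documents, key=lambda doc: len(words & set(doc.lower().split())))
    return f"Based on: {best}"


def agent_loop(tickets: list[str], max_steps: int = 10) -> list[tuple[str, str]]:
    """Agent shape: keep choosing and logging actions until work or budget runs out."""
    queue = list(tickets)
    log: list[tuple[str, str]] = []
    steps = 0
    while queue and steps < max_steps:
        ticket = queue.pop(0)
        # Stub policy: refunds are treated as edge cases that need a human.
        action = "escalate" if "refund" in ticket.lower() else "draft_reply"
        log.append((ticket, action))
        steps += 1
    return log


docs = [
    "Billing cycles start on the first of the month",
    "To reset your password open Settings and choose Security",
]
answer = rag_answer("how do I reset my password", docs)
actions = agent_loop(["Cannot log in", "Refund for double charge"])
```

`rag_answer` has no loop and no state; `agent_loop` has both, plus a step budget so it cannot run forever. That structural difference is what tells you which problem you have.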

Fine-tuning is a different beast entirely. Fine-tuning changes the model’s weights to internalize domain-specific behavior. It’s expensive, requires significant labeled data, and doesn’t give you agency—it just gives you a more specialized model. You can (and often should) use a fine-tuned model inside an agentic OS, but the two aren’t substitutes for each other.

| Approach | What It Does | Best For | What It Doesn’t Do |
|---|---|---|---|
| RAG | Fetches relevant documents at runtime to ground LLM responses | Knowledge-intensive Q&A, document search, factual accuracy | Multi-step execution, tool use, autonomous planning |
| Fine-Tuning | Modifies model weights to internalize domain behavior or style | Specialized tone, domain jargon, structured output formats | Real-time tool access, memory, multi-agent coordination |
| Agentic OS | Coordinates agents, memory, tools, and task execution across multiple steps | Autonomous workflows, multi-step tasks, complex goal completion | Improving base model knowledge or capability on its own |
| Prompt Engineering | Structures inputs to elicit better model outputs | Quick wins, zero-shot improvement, simple task shaping | Persistent state, tool use, coordination across agents |

Why does this matter so much?

Because the wrong tool choice doesn’t just waste development time—it creates systems that fail in unpredictable ways. I’ve seen a healthcare analytics team spend three months fine-tuning a model for report generation, only to discover their real bottleneck was workflow orchestration: the model was perfectly capable, but there was no infrastructure to move data between steps, validate outputs, or handle the 12 different API calls needed before the model could even see the relevant patient data. An agentic OS would have solved their problem. Fine-tuning didn’t.

The research is actually mixed on which approach delivers better ROI in the short term—it genuinely depends on your use case. But if your goal involves autonomous execution of multi-step workflows, the agentic OS should be your starting point, not an afterthought you bolt on later. (Trust me, retrofitting orchestration onto a system that wasn’t designed for it is exactly as painful as it sounds.)

What Agentic Operating Systems Actually Unlock: Real Use Cases and Outcomes

Enough theory. Let’s talk about what changes when organizations build proper agentic infrastructure, with specifics, not vague gestures toward “efficiency gains.”

Software development acceleration

One of the clearest early use cases is AI-assisted software engineering. Systems like Cognition AI’s Devin (released in 2024) and Anthropic’s agentic API capabilities for Claude don’t just generate code; they plan entire development workflows. Write tests, implement code, run the tests, debug failures, refactor, commit. According to Cognition’s published benchmarks, Devin resolved 13.86% of SWE-bench tasks fully autonomously, a number that sounds modest until you realize that the previous best result Cognition cited was 1.96%. The agentic OS underneath that system is doing the heavy lifting: managing the code execution environment, routing between editor/terminal/browser tools, maintaining context across long debugging sessions.

Scientific research augmentation

This one genuinely excites me. Research teams at institutions including DeepMind are deploying multi-agent systems that autonomously design experiments, analyze results, identify anomalies, generate hypotheses, and draft findings for human review. The agentic OS layer handles literature search coordination, data pipeline management, and cross-experiment context preservation. These systems aren’t replacing researchers, but they’re compressing the time between hypothesis and preliminary finding in ways that weren’t possible 18 months ago.

Enterprise process automation

For most organizations, the immediate value is simpler but still substantial: replacing brittle rule-based automation (RPA) with flexible agentic workflows. Traditional RPA breaks whenever a UI changes. An agentic OS-backed workflow can adapt—re-examine the interface, infer what changed, adjust its approach. McKinsey’s 2024 Technology Report estimated that organizations deploying adaptive agentic workflows saw 60–70% fewer automation-related incidents compared to traditional RPA implementations.

- Who it works for: Engineering teams building complex multi-step AI pipelines; enterprises with high-volume, variable workflows; researchers managing iterative analytical processes

- Who should wait: Teams still struggling with basic data quality; organizations without clear task boundaries for AI; use cases where a simple RAG system or a fine-tuned model is genuinely sufficient

- The honest caveat: Agentic systems are powerful but not immune to compounding errors. If an agent makes a wrong assumption in step 2 of a 15-step workflow, that error propagates. Human checkpoints—especially for high-stakes outputs—aren’t optional. They’re architectural requirements.
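The compounding-error caveat is easy to put numbers on. The sketch below assumes each step succeeds independently with the same probability and that nothing catches or repairs a mistake once made. Both are simplifications, and `end_to_end_success` is a hypothetical helper, but the arithmetic shows why checkpoints matter.

```python
def end_to_end_success(per_step_success: float, steps: int) -> float:
    """Probability that every step in the chain succeeds, with no recovery."""
    return per_step_success ** steps


for p in (0.99, 0.95, 0.90):
    print(f"per-step {p:.2f} -> 15-step workflow {end_to_end_success(p, 15):.1%}")
```

Under these assumptions, a step that is right 95% of the time yields a 15-step workflow that finishes cleanly less than half the time. A human checkpoint that catches errors partway through effectively splits the chain into shorter segments, each with much better odds.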

“The right mental model isn’t ‘AI that does tasks’; it’s ‘AI that navigates processes.’ The navigation infrastructure, what we’re calling the agentic OS, is what determines whether the agent arrives at the right destination or confidently walks off a cliff.” - Andrej Karpathy, Co-founder of OpenAI, former Tesla AI Director, speaking at a 2024 AI conference (paraphrased from public remarks)

Questions People Are Actually Asking (And the Direct Answers)

What’s the difference between an agentic operating system and a regular AI assistant?

A regular AI assistant responds to prompts—you ask, it answers, interaction ends. An agentic operating system enables AI to pursue goals autonomously across multiple steps: planning what to do, using tools to do it, monitoring results, handling failures, and producing outputs without waiting for human input at each step. The key distinction is autonomy duration: assistants operate turn-by-turn; agentic systems operate across extended task horizons.

Is an agentic OS the same as AutoGPT or CrewAI?

AutoGPT and CrewAI are specific implementations of agentic OS concepts—they’re frameworks, not the concept itself. Think of it like this: Linux and Windows are specific operating systems; “operating system” is the broader concept. AutoGPT pioneered the idea of autonomous LLM-driven task execution in 2023. CrewAI focuses specifically on multi-agent coordination with role-based agent design. LangGraph offers a more flexible graph-based approach. All three embody different design philosophies within the broader agentic OS paradigm.

How does memory work in an agentic operating system?

Agentic memory operates across three layers: working memory (the active LLM context window—short-term, high-fidelity), episodic memory (stored summaries of past actions, typically in vector databases like Pinecone or Weaviate—medium-term), and semantic memory (persistent domain knowledge, often in structured databases—long-term). The OS coordinates which memories to retrieve, when to compress working memory into episodic storage, and how to prioritize conflicting information across layers. Getting this right is what separates agents that can handle hour-long tasks from those that lose context after five steps.
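The "compress working memory into episodic storage" step might look something like this. It is a sketch with loud assumptions: whitespace-split words approximate tokens, and truncation stands in for the LLM-written summary a real system would use. `compact` is a hypothetical helper.

```python
from typing import Callable


def compact(
    working: list[str],
    episodic: list[str],
    budget_tokens: int,
    summarize: Callable[[str], str] = lambda text: text[:40],
) -> None:
    """Move the oldest working entries into episodic storage until under budget."""

    def tokens(entries: list[str]) -> int:
        return sum(len(entry.split()) for entry in entries)  # crude token estimate

    # Always keep at least the most recent entry in working memory.
    while len(working) > 1 and tokens(working) > budget_tokens:
        oldest = working.pop(0)
        episodic.append(summarize(oldest))


working = ["a b c d e", "f g h", "i j"]
episodic: list[str] = []
compact(working, episodic, budget_tokens=5)
```

After the call, the oldest entry has moved to `episodic` and the remaining two fit the budget. Evicting oldest-first is the simplest policy; prioritizing by relevance to the current subtask is a common refinement.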

Can I build an agentic OS without deep ML expertise?

Increasingly, yes, but you still need software engineering fundamentals. Frameworks like LangGraph, Microsoft’s Semantic Kernel, and CrewAI abstract away most of the ML complexity. What you do need is a clear understanding of task decomposition, API design, and error handling. The engineering patterns look more like distributed systems design than neural network development. That said, understanding basic LLM concepts (context windows, token limits, prompt engineering) remains essential for debugging when things go wrong, and things will go wrong.

What are the biggest risks of deploying agentic AI systems?

Three stand out consistently. First, error amplification: mistakes early in a task compound through subsequent steps in ways that single-turn systems can’t. Second, unintended actions: agents with tool access can take real-world actions (sending emails, executing code, modifying databases) that are difficult to reverse. Third, goal misalignment at scale: an agent optimizing for a proxy metric can produce technically correct but operationally harmful outputs. The NIST AI Risk Management Framework provides useful structure for thinking about agentic system governance, particularly its guidance on human oversight mechanisms for autonomous systems.
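For the second risk, unintended actions, a common mitigation is an approval gate in front of tools whose effects are hard to undo. The sketch below is illustrative only: the tool names in `IRREVERSIBLE` and the `guarded_call` helper are hypothetical, and the approver is a callback standing in for a human review step.

```python
from typing import Callable

# Hypothetical names of tools whose effects cannot easily be reversed.
IRREVERSIBLE = {"send_email", "delete_record"}


def guarded_call(
    tool_name: str,
    action: Callable[[], str],
    approve: Callable[[str], bool],
) -> str:
    """Run the action, but require approval first if the tool is irreversible."""
    if tool_name in IRREVERSIBLE and not approve(tool_name):
        return "blocked: awaiting human approval"
    return action()


sent: list[str] = []


def send() -> str:
    sent.append("quarterly report")
    return "email sent"


denied = guarded_call("send_email", send, approve=lambda name: False)
searched = guarded_call("web_search", lambda: "3 results", approve=lambda name: False)
approved = guarded_call("send_email", send, approve=lambda name: True)
```

Read-only tools pass straight through, so the gate adds friction only where a mistake would be costly.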

How do agentic operating systems relate to tools like ChatGPT or Claude?

ChatGPT and Claude are the LLM engines: the reasoning core that an agentic OS can use. OpenAI’s GPT-4o and Anthropic’s Claude models (such as Claude 3.5 Sonnet and Claude Opus 4) support function calling and tool use natively, which makes them agentic-OS-friendly. But the LLM alone isn’t the OS. The OS is the surrounding infrastructure: the task planner, the memory manager, the tool router, the coordination protocol. You could build an agentic OS that uses GPT-4o in some agents and Claude in others, which is exactly what some sophisticated enterprise implementations already do.
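Mixing models across agents is mostly a matter of coding against an interface rather than a vendor SDK. In the sketch below, `ChatModel`, `Agent`, and the two stub backends are hypothetical; real implementations would wrap each provider's client behind the same `complete` method.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Anything with a complete(prompt) -> str method can power an agent."""

    def complete(self, prompt: str) -> str: ...


class StubModelA:
    def complete(self, prompt: str) -> str:
        return f"A says: {prompt}"


class StubModelB:
    def complete(self, prompt: str) -> str:
        return f"B says: {prompt}"


class Agent:
    def __init__(self, role: str, model: ChatModel) -> None:
        self.role = role
        self.model = model

    def run(self, task: str) -> str:
        return self.model.complete(f"[{self.role}] {task}")


# Different agents in the same system backed by different models.
researcher = Agent("researcher", StubModelA())
writer = Agent("writer", StubModelB())
outputs = [researcher.run("find sources"), writer.run("draft summary")]
```

Because `Agent` depends only on the `ChatModel` shape, swapping the model behind one role does not touch the planner, memory, or routing code around it.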

The Bigger Picture: Where Agentic OS Fits in the AI Infrastructure Stack

Hang tight, because this final section reframes everything above—and explains why the agentic OS concept matters beyond just “better automation.”

The AI infrastructure stack is developing in layers that mirror the evolution of computing itself. At the base: compute (GPU clusters, TPUs, cloud infrastructure). Above that: model training infrastructure (distributed training frameworks, RLHF pipelines). Above that: model deployment infrastructure (inference optimization, API serving). And now, emerging as the critical next layer: agentic infrastructure—the OS layer for AI agents.

The companies that win the agentic OS layer will have enormous leverage over everything built on top of it—just as Microsoft’s dominance of PC operating systems gave it leverage over PC software for decades, or as AWS’s infrastructure dominance shapes the entire cloud application ecosystem. That’s not hyperbole. It’s why Amazon, Microsoft, Google’s DeepMind division, and Anthropic are all investing heavily in agentic infrastructure—not just model capability.

What’s most interesting—and what most analysis misses—is that the agentic OS winner may not be a model company at all. It might be an infrastructure-first player that’s agnostic to which LLM sits underneath. The history of computing suggests that the OS layer and the application layer tend to separate over time. There’s no reason to assume AI will be different.

For educators, developers, and organizations trying to make sense of this landscape: the best investment right now isn’t picking the “smartest” model. It’s understanding agentic architecture well enough to build systems that can adapt as the underlying models continue to improve. LearnPrompting.org’s agent curriculum is a genuinely good starting point—it covers agent fundamentals through to multi-agent coordination in a structured, pedagogically sound way that’s hard to find in scattered blog posts.

The models will keep getting better. The OS layer is where the durable infrastructure decisions are being made right now. That’s the battle worth watching.