There’s a moment every QA engineer knows intimately. It’s 11 PM. The release is scheduled for 6 AM. And you’re staring at a wall of failing test scripts not because the app broke, but because a developer renamed a CSS class. Again.

That specific, maddening frustration spending more time maintaining tests than actually running them is the problem agentic AI testing was born to solve. And it’s doing it faster than most people in the industry predicted.

But here’s what nobody’s telling you in those breathless LinkedIn posts: agentic AI testing isn’t just a shinier automation tool. It’s a fundamentally different category of technology. Understanding the difference matters — because buying the wrong thing right now could set your QA team back two years.

So let’s get into it. What is agentic AI testing, how does it actually work, who’s it for, and — honestly — what are the parts that still need work?

What Is Agentic AI Testing? (The Actual Definition)

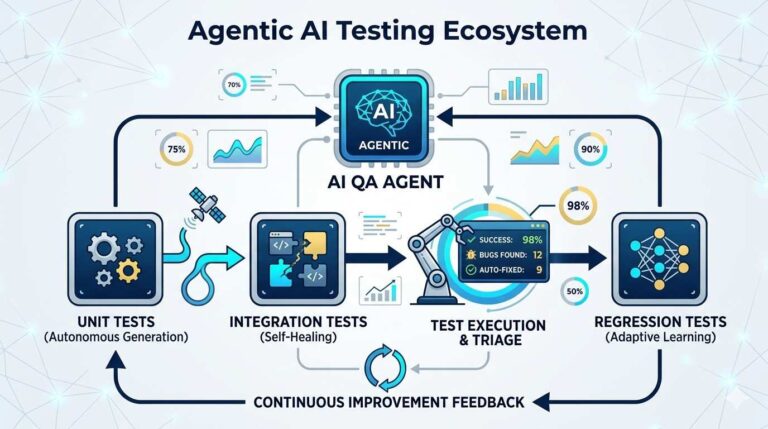

Agentic AI testing is a next-generation approach to software quality assurance where autonomous AI agents not scripts, not rules, not human testers following checklists independently plan, execute, adapt, and improve the testing process across the entire software development lifecycle.

The key word is autonomous. Not “AI-assisted.” Not “AI-augmented.” The agents genuinely perceive their environment, reason about what needs to be tested, take action, and then learn from the outcome. Think of it as the difference between a calculator (does what you tell it) and a junior QA engineer (understands what you’re trying to achieve and figures out how to get there).

According to UiPath’s technical documentation on agentic testing, the progression looks like this: traditional manual testing → automated scripted testing → AI-assisted testing → and now, agentic testing. Each stage didn’t just add speed. Each stage fundamentally changed the role of the human in the loop.

With agentic AI testing, that human role shifts from “person who writes and fixes tests” to “person who oversees and guides intelligent agents.” That’s a big deal and for a lot of QA professionals, it feels either exciting or terrifying, depending on which day you ask them.

Why Is Everyone Suddenly Talking About This?

Fair question. The concept of intelligent testing agents isn’t brand new. So why is 2024-2025 the inflection point?

Three things collided at once.

First, large language models got genuinely good. GPT-4 and its successors can now understand natural language requirements — “test that the checkout flow works for users with expired credit cards on mobile” — and translate that into executable test logic. That wasn’t possible at scale two years ago.

Second, the maintenance problem hit a crisis point. According to the State of Testing Report 2024, software teams spend an average of 60-80% of their test automation effort on maintenance — not on writing new tests, not on finding new bugs, just maintaining the tests they already have. That’s not sustainable, and frankly, it’s why a huge number of organizations abandoned automation efforts entirely.

Third, the data finally backs the promise. Tricentis, after introducing its Agentic Test Automation tool, reported up to 85% reduction in manual effort for initial test case creation and up to 60% productivity gains in internal testing and open beta programs. Those aren’t hypothetical projections. Those are real teams getting real results.

And the market is responding accordingly. The global agentic AI market is projected to grow from $5.25 billion in 2024 to $199.05 billion by 2034, representing a CAGR of approximately 43.84%. When you see numbers like that, it’s worth paying attention even if you’re usually skeptical of industry forecasts (I am, usually).

How Agentic AI Testing Actually Works: The 4-Stage Intelligence Loop

Here’s where most explainer articles get frustratingly vague. “The AI understands your app and tests it automatically.” Cool. But how?

Let me break it down into the actual mechanics.

Stage 1: Perceive Reading the Environment

Before an agentic system can test anything, it needs to understand what it’s working with. This isn’t just “scan the UI.” Agentic AI agents ingest multiple data streams simultaneously:

- Source code and code changes (via Git diffs)

- Functional requirements, user stories, and Jira tickets

- Historical defect data and production logs

- Live application state — what’s on screen, what APIs are responding, what’s in the database

Unlike static automation scripts, agentic AI is adaptive: it can plan, correct errors, and optimize itself without constant human intervention. The agents perceive requirements, code, or UI then reason about what to do based on rules, context, or learned patterns.

This multi-source perception is what makes agentic systems so different from Selenium or Cypress. Those tools see one thing: the DOM. Agentic systems see the whole picture.

Stage 2: Reason Deciding What Needs Testing

This is the part that still feels like magic, even after you understand it technically.

The agent doesn’t just run all existing tests. It reasons about what changed, what that change could have broken, and what test coverage is needed. It uses the same kind of contextual judgment your best QA engineer would use — except it can do it across thousands of files in seconds.

For example: if a developer pushes a change to the payment processing module, an agentic system doesn’t just re-run the payment tests. It traces dependencies, identifies which other features touch that module (user account history, invoice generation, refund flows), and generates or prioritizes tests for all of them. Automatically. Before anyone asks it to.

That’s not scripted logic. That’s reasoning.

Stage 3: Act -Executing Tests and Adapting in Real Time

Here’s where traditional automation falls apart and agentic AI holds firm.

Traditional test frameworks rely on brittle locators — specific CSS selectors, XPath expressions, element IDs. The moment a developer changes #login-btn to #auth-submit, three dozen tests break. Your Friday afternoon evaporates.

Agentic AI testing uses machine learning to understand elements contextually, the way a human tester would. Instead of looking for #login-button, an AI agent understands “the primary action button in the authentication section that enables user access.” When developers change the implementation but not the functionality, the AI adapts seamlessly.

This is called self-healing test automation, and it’s probably the single feature that gets QA teams most excited because it directly eliminates the maintenance nightmare that’s been draining their time for years.

Stage 4: Learn Getting Better Over Time

This is the stage that separates true agentic systems from marketing fluff.

Real agentic AI testing systems learn from every test run. Failed tests, edge cases, production bugs all of that feeds back into the model, making the next test generation smarter. The system builds a memory of your specific application: its quirks, its common failure modes, its high-risk areas.

Over time, the agent gets better at predicting where bugs are likely to hide. It stops treating all code equally and starts prioritizing intelligently focusing coverage where it matters most.

(This is also why implementation quality varies so wildly between vendors. Some systems genuinely learn. Others just slap “AI” on a script generator and call it agentic. More on that in the comparison section.)

Agentic AI Testing vs. Traditional Automation: The Honest Comparison

I’m going to be blunt here, because too many vendor blogs aren’t.

| Traditional Test Automation | Agentic AI Testing | |

|---|---|---|

| Test Creation | Manual script writing | AI generates from natural language / requirements |

| Maintenance | 60-80% of effort | Near-zero (self-healing) |

| Adaptation | Breaks when UI changes | Adapts automatically |

| Coverage Logic | What you tell it to test | Reasons about what should be tested |

| Learning | None | Continuously improves |

| Setup Cost | Moderate | Higher (initially) |

| Maturity | Battle-tested | Rapidly maturing (2024-2025) |

The honest caveat: agentic AI testing isn’t a drop-in replacement for everything you have today. For simple, stable CRUD applications with predictable UI, traditional automation is still cheaper and easier. Where agentic AI shines is in complex, rapidly-evolving applications microservice architectures, enterprise platforms, AI-powered apps — where the testing surface is too large and too dynamic for humans or scripts to keep up.

There’s also a real learning curve in managing agentic systems. Your best QA engineers won’t be writing less — they’ll be writing differently: defining test objectives, validating agent reasoning, interpreting coverage reports, and making judgment calls the AI can’t. It’s a skills shift, not a headcount reduction.

(No judgment if that last sentence made you nervous. It made me nervous too, the first time I really sat with it.)

What Problems Does Agentic AI Testing Actually Solve?

Let’s get concrete. Abstract benefits are easy. Let me give you the real-world scenarios where this technology pays off.

Problem 1: You’re testing AI-powered applications.

This is one the industry isn’t discussing enough. Autonomous agents are no longer experimental — they’re generating reports, handling customer requests, making business decisions, and integrating across enterprise systems. Traditional QA methods, built for deterministic code and predictable paths, aren’t equipped for this kind of complexity.

How do you write a test script for a system that reasons? You can’t test an LLM-powered feature the way you test a login form. Agentic testing approaches this differently evaluating judgment, reasoning chains, and contextual appropriateness, not just “did the function return the expected value.”

Problem 2: Releases are moving faster than your test suite.

If your dev team is shipping multiple times a week and your QA team is perpetually behind, agentic AI testing can close that gap dramatically. Organizations using agentic AI testing benefit from reduced manual test maintenance costs, shorter release cycles, and fewer defects reported by users.

Problem 3: Enterprise application complexity is exploding.

Enterprise applications deal with large volumes of concurrent users, complex workflows, and microservice architectures that have interconnected APIs, databases, and third-party services. Even a minor defect in one area can spiral into critical failures. Manual testing can’t cover this at scale. Traditional automation breaks constantly. Agentic AI was built specifically for this environment.

Problem 4: Security testing is too slow and too shallow.

Agentic AI testing enables adversarial prompt injections to test if prompts can bypass safety filters, contextual framing exploits to check if agents are following harmful instructions when assuming roles or changing contexts, and toxicity detection to flag biased or toxic outputs. For teams building consumer-facing AI features, this level of safety testing wasn’t practically achievable before.

The Realistic Challenges (Because Nobody’s Doing You Favors by Hiding These)

Here’s the part most vendor content conveniently omits.

Challenge 1: The 40% failure rate.

40% of agentic AI projects fail due to inadequate infrastructure foundations. Not bad technology — bad implementation. Teams underestimate the data quality requirements, the integration complexity, and the organizational change management needed to make agentic systems work.

Challenge 2: Hallucination in test generation.

Because agentic systems rely on LLMs, they can generate test cases that look correct but test the wrong thing. Because agents rely on LLMs during testing, they are prone to hallucinations — making human oversight essential to identify and mitigate these risks. You can’t just trust the output blindly. You need experienced QA professionals reviewing agent-generated tests, especially in the early stages.

Challenge 3: Security and compliance risks.

Threat categories impacting AI agents include input manipulation and data poisoning, credential hijacking and abuse leading to unauthorized control and data theft, and agent deviation and unintended behavior due to internal flaws or external triggers that can cause reputational damage and operational disruption. If your agentic testing system has access to production data or critical systems, the attack surface matters.

Challenge 4: “Agentwashing.”

The most common misconception is referring to AI assistants as agents a misunderstanding known as “agentwashing.” Gartner flagged this specifically in their 2025 strategic trends report. A lot of what’s being sold as “agentic testing” is really just AI-assisted test generation which is useful, but a fundamentally different category. Ask vendors hard questions: Can the system reason about what to test, or does it just generate tests from a prompt? Can it adapt mid-execution when something unexpected happens? Can it learn across sessions?

Who Should Be Using Agentic AI Testing Right Now?

Not everyone. Let’s be honest about that.

Strong candidates:

- Enterprise software teams with complex, rapidly-evolving applications

- QA teams spending more than 50% of their time on test maintenance

- Companies building or testing AI-powered features (chatbots, recommendation engines, autonomous workflows)

- Organizations running continuous deployment with multiple releases per week

- Dev teams working with microservice architectures where regression risk is high

Wait-and-watch candidates:

- Small teams with simple, stable applications where traditional automation works fine

- Organizations without the infrastructure to integrate agentic systems into their CI/CD pipeline

- Teams that don’t have QA engineers experienced enough to validate AI-generated test logic

The research supports the phased adoption approach. A Gartner poll of 147 CIOs and IT function leaders found that 24% had already deployed AI agents, 50% were researching and experimenting, and 17% planned to deploy by end of 2026. The smart money is in the “experimenting” camp right now — piloting on one product or one test suite before committing fully.

The Tools Leading the Space in 2025

Without endorsing any single platform, here are the categories of tools worth evaluating:

Enterprise-grade platforms: Tricentis Tosca’s Agentic Test Automation, UiPath’s agentic testing capabilities, and EPAM’s Agentic QA™ (launched October 2025) represent the mature end of the market — battle-tested, well-integrated, expensive.

Mid-market options: Functionize, Virtuoso QA, and Testgrid are worth evaluating for teams that need agentic capabilities without enterprise-level contracts.

Open-source frameworks: AutoGPT, LangChain, and BabyAGI give technically sophisticated teams the building blocks to construct custom agentic testing systems. High flexibility, high setup cost.

For teams evaluating tools, MIT’s Computer Science and AI Laboratory (CSAIL) has published foundational research on autonomous agent evaluation frameworks that provides useful criteria for vendor assessment.

The AgentBench evaluation suite, developed by Tsinghua University researchers, is the closest thing to a neutral standard for measuring agentic AI capability — and it’s worth reviewing before you make any purchasing decisions.

What the Next 3 Years Look Like

The trajectory is pretty clear at this point. Gartner predicts 40% of enterprise applications will be integrated with task-specific AI agents by the end of 2026, up from less than 5% today. In the best-case scenario, agentic AI could drive approximately 30% of enterprise application software revenue by 2035, surpassing $450 billion.

For QA specifically: Gartner predicts that by 2028, at least 15% of day-to-day work decisions will be made autonomously through agentic AI, up from zero percent in 2024.

And critically, the U.S. Bureau of Labor Statistics estimates that employment opportunities for software developers and testers will increase “much faster than average” until 2033, in part due to the way AI is fueling growth in digital products. Agentic AI isn’t shrinking the QA job market. It’s changing what QA engineers do, and increasing demand for people who can work with intelligent systems.

The engineers who understand how to orchestrate, validate, and improve agentic testing systems are going to be extraordinarily valuable. That’s not a prediction — it’s already happening.

Key Takeaways (For People Who Scroll to the Bottom)

What is agentic AI testing? It’s software quality assurance where autonomous AI agents independently plan, create, execute, and improve tests without human scripting or constant oversight.

How is it different from regular test automation? Traditional automation follows instructions. Agentic AI reasons about what to test, adapts when things change, and learns from every run.

Who needs it now? Teams with complex, fast-moving applications especially those building AI-powered features — will see the clearest ROI.

What are the risks? Hallucination in test generation, infrastructure requirements, and “agentwashing” from vendors selling AI-assisted tools as truly agentic systems.

What should you do today? Pilot on one test suite. Measure maintenance time before and after. Let the data tell you whether to expand.